Camera gets all the exposure

By Kimberly Patch, Technology Research News

Researchers at Columbia University have come up with a technology designed to eliminate overexposures and underexposures in still and moving pictures.

The technology modifies a camera to change the way its pixels collect light, then uses software to process the results.

Traditionally, the key to improving picture quality has been improving the resolution, or fineness of the image, by boosting the number of individual dots, or pixels, a picture contains. Film cameras use light-sensitive crystals, while digital cameras use digital sensors to collect each pixel of light. Today's digital cameras are capable of taking pictures with around three million pixels, while typical 35mm film captures around four million dots of light.

The Columbia researchers, however, are looking at things from a different angle. "There's a whole different dimension to imaging, which is dynamic range -- how many brightness levels do the brightness values of [a] scene map to," said Shree Nayar, professor of computer science at Columbia University.

A digital camera, for example, typically measures about 256 brightness values. Because everything in the real world is being mapped onto these 256 values, digital cameras have a difficult time capturing the subtle range of brightness variations that you find in any real scene, said Nayar. Typical 35mm film captures about four times as many brightness values, but can also be overwhelmed by high contrast scenes.

For instance, "if you take a picture of somebody [in front of a bright window], the person ends up being a silhouette, so either some part of the image ends up being too dark or some part of the image ends up being washed out," said Nayar.

Nayar's high dynamic range technology boosts brightness levels to as many as 4,096. "Because it's measuring a lot more brightness variations... we do have all the subtle variations embedded in [the picture,]" said Nayar. The high dynamic range allows the camera to capture both the details of the shadowed face in front of the window, and the bright scene beyond.
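To make the jump from 256 to 4,096 brightness values concrete, the short sketch below shows how many bits each range needs and how two nearby shadow tones fare under each. It assumes a simple linear mapping from scene brightness to integer codes, which real sensors and film do not follow exactly; the function names and the sample tones are illustrative only.

```python
def bits_for_levels(levels: int) -> int:
    """Smallest number of bits that can label `levels` distinct brightness values."""
    return (levels - 1).bit_length()


def quantize(brightness: float, levels: int) -> int:
    """Map a brightness in [0.0, 1.0] to an integer code in [0, levels - 1].

    Assumption: a linear response, chosen only to keep the example simple.
    """
    clipped = min(max(brightness, 0.0), 1.0)
    return min(int(clipped * levels), levels - 1)


# Two nearby shadow tones (hypothetical values).
tone_a, tone_b = 0.010, 0.011

print(bits_for_levels(256), bits_for_levels(4096))          # 8 12
print(quantize(tone_a, 256), quantize(tone_b, 256))          # same code
print(quantize(tone_a, 4096), quantize(tone_b, 4096))        # different codes
```

With 256 levels the two tones collapse into one code, so the difference between them is lost at capture time; with 4,096 levels they stay distinct.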

The technology works in a roundabout way.

Camera pixels typically all collect light at the same exposure, or shutter speed. For example, at the common setting of 1/60, each pixel would collect light for one 60th of a second. The exposure setting is of necessity a compromise so that the majority of the picture will be exposed correctly.

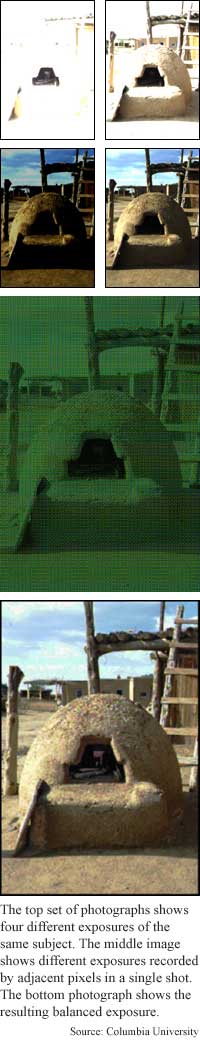

The hardware component of the high dynamic range technology is a mask put over a camera's light gathering crystals or sensors that causes each to take a different exposure than its neighbor. For instance, a group of four pixels will be exposed at four different settings, and that pattern will continue throughout the picture, said Nayar. The effect is a kind of bracketing -- taking a picture at several settings above and below the actual shutter speed setting -- where all the information is captured at the same moment and contained within one photograph.

The interim result is a checkerboard-like photograph, with each tiny square exposed differently than its neighbors. Because information about a pixel can be gained from neighboring pixels, Nayar's high dynamic range software can construct from the data an image that shows optimum exposure rates for all parts of the picture.
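The mask-and-reconstruct idea can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the researchers' actual algorithm: the four relative exposures, the 8-bit sensor, and the neighborhood rule (sum the usable codes in a 3x3 window and divide by the sum of their exposures) are all stand-ins chosen for brevity.

```python
# Relative exposures for one 2x2 group of pixels.
# Assumed values (1, 1/4, 1/16, 1/64); the article does not give the real ones.
PATTERN = [[1.0, 0.25],
           [0.0625, 0.015625]]
MAX_CODE = 255  # an 8-bit sensor reports codes 0..255


def exposure_at(row, col):
    """Exposure of the mask cell covering pixel (row, col); the 2x2 pattern repeats."""
    return PATTERN[row % 2][col % 2]


def capture(scene):
    """Simulate the masked sensor: scale each pixel by its exposure, then clip to 8 bits."""
    return [[min(int(value * exposure_at(r, c)), MAX_CODE)
             for c, value in enumerate(line)]
            for r, line in enumerate(scene)]


def reconstruct(raw):
    """Estimate scene brightness at each pixel from usable pixels in its 3x3 window.

    A pixel is usable if it is neither black (0) nor saturated (MAX_CODE).
    """
    rows, cols = len(raw), len(raw[0])
    result = []
    for r in range(rows):
        out_row = []
        for c in range(cols):
            code_sum = 0
            exposure_sum = 0.0
            for rr in range(max(r - 1, 0), min(r + 2, rows)):
                for cc in range(max(c - 1, 0), min(c + 2, cols)):
                    code = raw[rr][cc]
                    if 0 < code < MAX_CODE:
                        code_sum += code
                        exposure_sum += exposure_at(rr, cc)
            out_row.append(code_sum / exposure_sum if exposure_sum else 0.0)
        result.append(out_row)
    return result


# A hypothetical scene: a dark face (40) next to a bright window (8000).
scene = [[40.0, 40.0, 8000.0, 8000.0] for _ in range(4)]
raw = capture(scene)
estimate = reconstruct(raw)

print(raw[0], raw[1])
print(round(estimate[0][0], 1), estimate[0][3])
```

In this toy scene, a single exposure of 1 would saturate the window and a single exposure of 1/64 would record the face as black, yet the mixed pattern recovers both regions: the bright corner comes back as 8000 from its one unsaturated neighbor, and the dark corner lands near 40. Pixels along the boundary blend the two regions, which is the sharpness cost of borrowing information from neighbors.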

In addition, because the finished photograph contains a lot of information, users will be able to change it in many ways. "The idea ... is to make better measurements, and then you can always use [software] to enhance ... spots. But you can't enhance anything if you didn't capture it to begin with," Nayar said.

The researchers have also adapted the technology to color imaging, which already has three types of pixels -- red, green and blue. The technology assigns pixels of each color all the different exposure rates. For example, a color imaging camera spanning four different exposures would have pixels of 12 different combinations of color and exposure, and the different types of pixels would be evenly distributed.
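The count of 12 pixel types follows from pairing every color with every exposure. The sketch below enumerates them; the exposure values are assumed for illustration, and the article does not describe how the types are laid out on the sensor.

```python
from itertools import product

COLORS = ("red", "green", "blue")
EXPOSURES = (1.0, 0.25, 0.0625, 0.015625)  # assumed relative exposures

# Every color filter is paired with every exposure level.
pixel_types = list(product(COLORS, EXPOSURES))

print(len(pixel_types))  # 12
for color in COLORS:
    # Each color sees all four exposures.
    print(color, [e for c, e in pixel_types if c == color])
```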

The next step is building a prototype camera, said Nayar. The camera will be capable of four simultaneous exposures and should be done within a year, he said.

The technology will prove useful for taking pictures in extreme lighting conditions, said Narendra Ahuja, a professor of engineering and computer science at the University of Illinois at Urbana-Champaign. "It provides a nice, useful way of trading off ... sharpness... to capture a large brightness range. Previous technologies have provided other tradeoffs -- they help achieve larger brightness range at the expense of more hardware, more imaging time [or] different manufacturing processes. Nayar's technology presents the user with a useful new option."

The researchers are also looking into adapting the technology for further uses, including medical imaging, said Nayar. It could be used in any imaging system, including video, electron microscopes and medical imaging equipment like x-rays, he said.

Nayar's collaborators on the technology were Tomoo Mitsunaga, a visiting scientist at Columbia from Sony Corp., and Columbia graduate student Srinivasa Narasimhan. Funding for initial work on the project was provided by DARPA and the Office of Naval Research (ONR).

Timeline: < 1 year

Funding: Government, University

TRN Categories: Computer Vision and Image Processing

Story Type: News

Related Elements: Photo 1; Photo 2; Photo 3; Technical paper, "High Dynamic Range Imaging: Spatially Varying Pixel Exposures," proceedings of June 13-15, 2000 IEEE conference on Computer Vision and Pattern Recognition.

September 27, 2000

© Copyright Technology Research News, LLC 2000-2006. All rights reserved.