Multicamera surveillance automated

Imagine a bank of cameras trained at different angles on many objects, such as people at an airport. It takes time for an operator to look at the images in each camera, see if a particular object of interest is in that image, then manually zoom in to take a closer look.

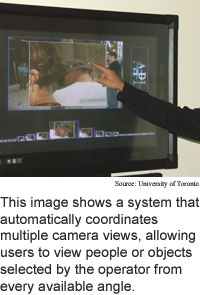

Researchers from the University of Toronto have developed a system that lets the user indicate an object in one view and automatically zooms to that object in all other views. The system ranks the camera views by view quality and zooms into every image that contains the object. According to the researchers, this takes about half a second in a system of as many as 200 cameras.
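
The article does not say how the system measures view quality or chooses the zoom. As a rough illustration only, the Python sketch below assumes the object's 3D position and approximate radius are already known and models each camera as a level pinhole camera (the `Camera` class, `project`, and `rank_views` are hypothetical names). It scores each view by the object's apparent size, discounted by its distance from the image centre (an invented metric, not the researchers'), then computes a square zoom window around the object in every view that can see it.

```python
import math
from dataclasses import dataclass


@dataclass
class Camera:
    """Hypothetical simplified camera: mounted level, rotated only about the vertical axis."""
    name: str
    position: tuple  # (x, y, z) in world coordinates, metres; z is up
    yaw: float       # viewing direction in the ground plane, radians
    focal_px: float  # focal length expressed in pixels
    width: int = 640
    height: int = 480


def project(cam, point):
    """Pinhole projection of a world point; returns (u, v, depth) or None if behind the camera."""
    dx, dy, dz = (p - c for p, c in zip(point, cam.position))
    depth = dx * math.cos(cam.yaw) + dy * math.sin(cam.yaw)    # distance along the optical axis
    if depth <= 0:
        return None
    lateral = dx * math.sin(cam.yaw) - dy * math.cos(cam.yaw)  # offset to the camera's right
    u = cam.width / 2 + cam.focal_px * lateral / depth
    v = cam.height / 2 - cam.focal_px * dz / depth              # image rows grow downward
    return u, v, depth


def rank_views(cameras, point, radius_m, margin=2.0):
    """Return (name, score, crop) for every camera that sees `point`, best view first.

    score: apparent radius in pixels, discounted by distance from the image centre
    (an assumed quality metric). crop: (left, top, side) of a square zoom window.
    """
    ranked = []
    for cam in cameras:
        proj = project(cam, point)
        if proj is None:
            continue  # object is behind this camera
        u, v, depth = proj
        if not (0 <= u < cam.width and 0 <= v < cam.height):
            continue  # object projects outside this camera's image
        r_px = cam.focal_px * radius_m / depth
        half_diag = math.hypot(cam.width, cam.height) / 2
        off_centre = math.hypot(u - cam.width / 2, v - cam.height / 2) / half_diag
        score = r_px * (1.0 - off_centre)
        # Zoom window: `margin` times the object's apparent diameter, kept inside the image.
        side = round(min(2 * r_px * margin, cam.width, cam.height))
        left = min(max(round(u - side / 2), 0), cam.width - side)
        top = min(max(round(v - side / 2), 0), cam.height - side)
        ranked.append((cam.name, score, (left, top, side)))
    ranked.sort(key=lambda r: r[1], reverse=True)
    return ranked


cams = [
    Camera("A", (0.0, 0.0, 1.5), 0.0, 800.0),    # 5 m from the object, facing it
    Camera("B", (0.0, 3.0, 1.5), 0.0, 800.0),    # object falls outside its field of view
    Camera("C", (-10.0, 0.0, 1.5), 0.0, 800.0),  # 15 m away, facing it
    Camera("D", (10.0, 0.0, 1.5), 0.0, 800.0),   # object is behind it
]
for name, score, crop in rank_views(cams, (5.0, 0.0, 1.5), radius_m=0.3):
    print(name, round(score, 1), crop)
# A 48.0 (224, 144, 192)
# C 16.0 (288, 208, 64)
```

A real installation would use fully calibrated camera poses (rotation plus translation) and would also need an occlusion test, which this sketch leaves out.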

The system can be used wherever multiple cameras are used for surveillance, such as in airports or casinos. It can also speed up sifting through large numbers of still pictures of a scene, such as archeological or crime scene photos.

The researchers are working on adding real-time object tracking to the system so that it can follow an object chosen by the user.

The system coordinates the multiple views using the three-dimensional spatial location of the object of interest, which it finds using the visual input plus sound localization information from an array of microphones.
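
The article does not describe how the microphone array's data are turned into a location. One standard approach is time-difference-of-arrival (TDOA) localization: a sound reaches each microphone at a slightly different time, and the candidate location whose predicted delays best match the measured ones is taken as the source. The sketch below is a minimal brute-force version under stated assumptions: known microphone positions, a source at a fixed, assumed height, and noise-free delays. The names `tdoas` and `localize` are hypothetical, and this is not presented as the researchers' method.

```python
import itertools
import math

SPEED_OF_SOUND = 343.0  # metres per second in air at roughly 20 °C


def tdoas(source, mics, c=SPEED_OF_SOUND):
    """Arrival-time differences (seconds) at mics[1:] relative to mics[0]."""
    times = [math.dist(source, m) / c for m in mics]
    return [t - times[0] for t in times[1:]]


def localize(measured, mics, xs, ys, z=1.5, c=SPEED_OF_SOUND):
    """Brute-force grid search over (x, y) at an assumed source height z.

    Returns the grid point whose predicted delays best match `measured`
    in the least-squares sense.
    """
    best, best_err = None, math.inf
    for x, y in itertools.product(xs, ys):
        predicted = tdoas((x, y, z), mics, c)
        err = sum((p - m) ** 2 for p, m in zip(predicted, measured))
        if err < best_err:
            best, best_err = (x, y, z), err
    return best


# Hypothetical room: four ceiling microphones at the corners of a 10 m x 10 m area.
mics = [(0.0, 0.0, 3.0), (10.0, 0.0, 3.0), (0.0, 10.0, 3.0), (10.0, 10.0, 3.0)]
true_source = (4.0, 6.5, 1.5)
measured = tdoas(true_source, mics)  # stands in for delays measured from audio
grid = [i * 0.5 for i in range(21)]  # 0.0 to 10.0 m in 0.5 m steps
print(localize(measured, mics, grid, grid))  # (4.0, 6.5, 1.5)
```

In practice the delays would first be estimated from the recorded audio, for example by cross-correlating pairs of microphone signals, and the acoustic estimate would be combined with the visual cue rather than used on its own.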

The basic method is ready for practical applications now; real-time object tracking will be ready for commercial use in about a year, according to the researchers. The work appeared in the proceedings of the 2004 IEEE International Conference on Systems, Man and Cybernetics, held in October in The Hague, Netherlands.

© Copyright Technology Research News, LLC 2000-2010. All rights reserved.